Human-in-the-Loop for AI Agents: Best Practices, Frameworks, Use Cases, and Demo


AI agents are no longer just passive observers in our applications—they’re active participants in our systems. With the rapid rise of LLMs, agents today can query APIs, modify infrastructure, respond to users, and trigger workflows. They’re increasingly embedded in customer support, developer tools, operations, and even decision-making pipelines.

But with this autonomy comes a critical question:

Can you trust an agent to act without oversight?

The short answer: no.

Agents may hallucinate actions, misinterpret prompts, or overstep boundaries. And when those actions touch sensitive systems—like access control, financial operations, or customer data—it's not a risk that you should take.

That doesn't mean AI agents have no place in performing these actions. They will be capable of doing so sooner or later, and the real question is how we adequately prepare for it, especially from a security perspective.

That's where the concept of Human-in-the-Loop (HITL) comes in. By delegating final decisions to a human, developers can combine the efficiency of automation with the judgment of real people. That's what we're here to talk about. This article explores why HITL matters, how the workflow operates, the frameworks that support it, common design patterns, a working demo, and best practices.

Let's get into it.

AI agents are incredibly useful—but they’re not infallible. Even the most advanced LLMs operate without true understanding. They can simulate reasoning, but they can’t evaluate risk, interpret social nuance, or take accountability for decisions.

When agents are empowered to take actions, not just generate text, that gap becomes a liability.

Now imagine those behaviors in the context of changing user roles in a production system, approving infrastructure changes in a CI/CD flow, accessing knowledge bases, deleting customer data, or issuing refunds. You can see how quickly risks can escalate.

Inserting humans at key decision points allows you to prevent irreversible mistakes before they happen; ensure accountability, so that every action has a reviewer or approver; comply with audit requirements, such as SOC 2 policies and internal governance; and build trust by making your AI a supervised assistant instead of a black box.

This isn't a nice-to-have. In many agentic workflows, HITL is the only responsible way forward.

When we talk about delegating permissions in AI systems, we don’t mean giving humans more buttons to press. We mean teaching agents to ask for permission—and wait.

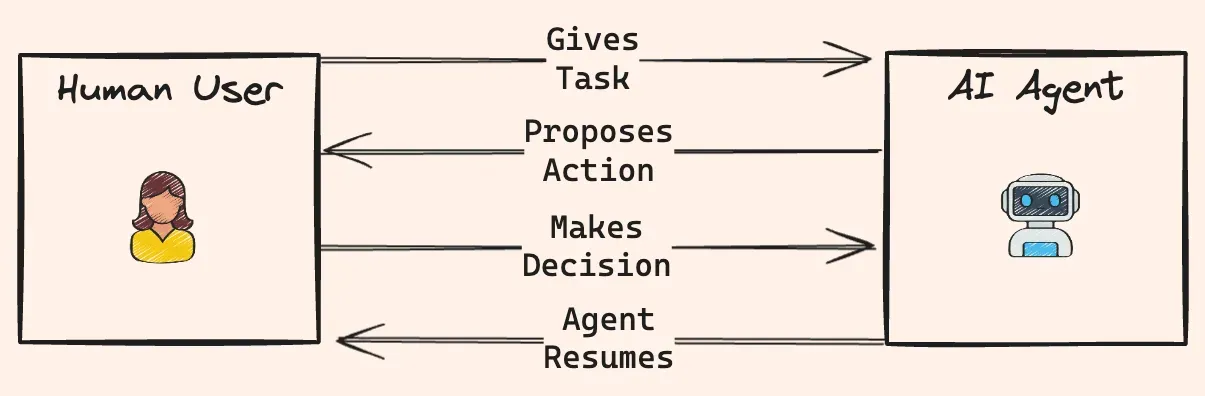

This is the core of human-in-the-loop (HITL):

The agent doesn’t act until a human explicitly approves the request.

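The HITL workflow follows a predictable and repeatable pattern: the agent proposes an action, pauses before executing it, a human reviews the request and approves or denies it, and the agent continues only after approval. In LangGraph, the pause is implemented with interrupt().

This pattern works across roles, industries, and use cases, from DevOps and security to customer service and compliance.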
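As a rough illustration of this pattern, here is a minimal, framework-free sketch in plain Python. Everything in it (`ProposedAction`, `execute_with_approval`, the stand-in reviewers) is a hypothetical name invented for this example, not part of any library mentioned in this article. It only shows the core idea: the action does not run unless a human says yes.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ProposedAction:
    """An action the agent wants to take, plus the reason it gives."""
    name: str
    reason: str
    run: Callable[[], str]


def execute_with_approval(action: ProposedAction,
                          ask_human: Callable[[str], bool]) -> str:
    """Run the action only if the human reviewer explicitly approves it."""
    prompt = f"Agent wants to run '{action.name}' because: {action.reason}. Approve?"
    if not ask_human(prompt):
        return f"{action.name}: denied by reviewer"
    return action.run()


# Stand-in reviewers; a real system would prompt a person instead.
def always_deny(prompt: str) -> bool:
    return False


def always_approve(prompt: str) -> bool:
    return True


refund = ProposedAction(
    name="issue_refund",
    reason="customer reported a duplicate charge",
    run=lambda: "refund issued",
)

print(execute_with_approval(refund, always_deny))
print(execute_with_approval(refund, always_approve))
```

The reviewer is passed in as a function so the same gate can be backed by a CLI prompt, a chat message, or a dashboard without changing the agent code.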

The agent ecosystem is evolving fast—and so are the tools designed to add human judgment into the loop.

Below is a breakdown of the most effective frameworks and libraries you can use today to build human-in-the-loop (HITL) workflows for AI agents. Each has a different focus, but all support some form of permission delegation or real-time human approval.

| Framework / Tool | Strengths | HITL Support |
|---|---|---|
| LangGraph | Graph-based control, modular nodes, native interrupt/resume support | interrupt() pauses the graph for human input |
| CrewAI | Multi-agent task orchestration with role-based design | human_input flag and HumanTool support |
| HumanLayer | SDK/API for integrating human decisions across channels (Slack, Email, Discord) | @require_approval() decorator and human_as_tool() support |
| LangChain MCP Adapters | Bridges LangChain agents with external access/approval flows like Permit.io | HITL when combined with LangGraph or LangChain callbacks |
| Permit.io + MCP | Real-world authorization engine with UI/API-based access and operation approvals | Built-in support for access requests and delegated approvals |

LangGraph is ideal for building structured workflows where you need full control over how an agent reasons, routes, and pauses. Its interrupt() function lets you pause the graph mid-execution, wait for human input, and resume cleanly, making it a top choice for inserting HITL checkpoints. Use it when you need custom routing logic or deterministic, debuggable behavior, or when you're managing multiple agents or tool types.

CrewAI focuses on collaborative, role-based agent teams. It’s great for decomposing tasks among agents with different goals or capabilities. HITL comes in via human_input, or by defining a HumanTool the agent can call for guidance. Use it when your workflow involves multiple agents, or when you want to keep humans in the loop as decision-makers or fallback experts.

The HumanLayer SDK enables agents to communicate with humans via familiar tools (Slack, Email, Discord). Its decorators (@require_approval, human_as_tool) wrap functions to make approval logic seamless. Use it when you want asynchronous human decisions, notifications/multi-channel communication, or when you need an orchestration-agnostic implementation.

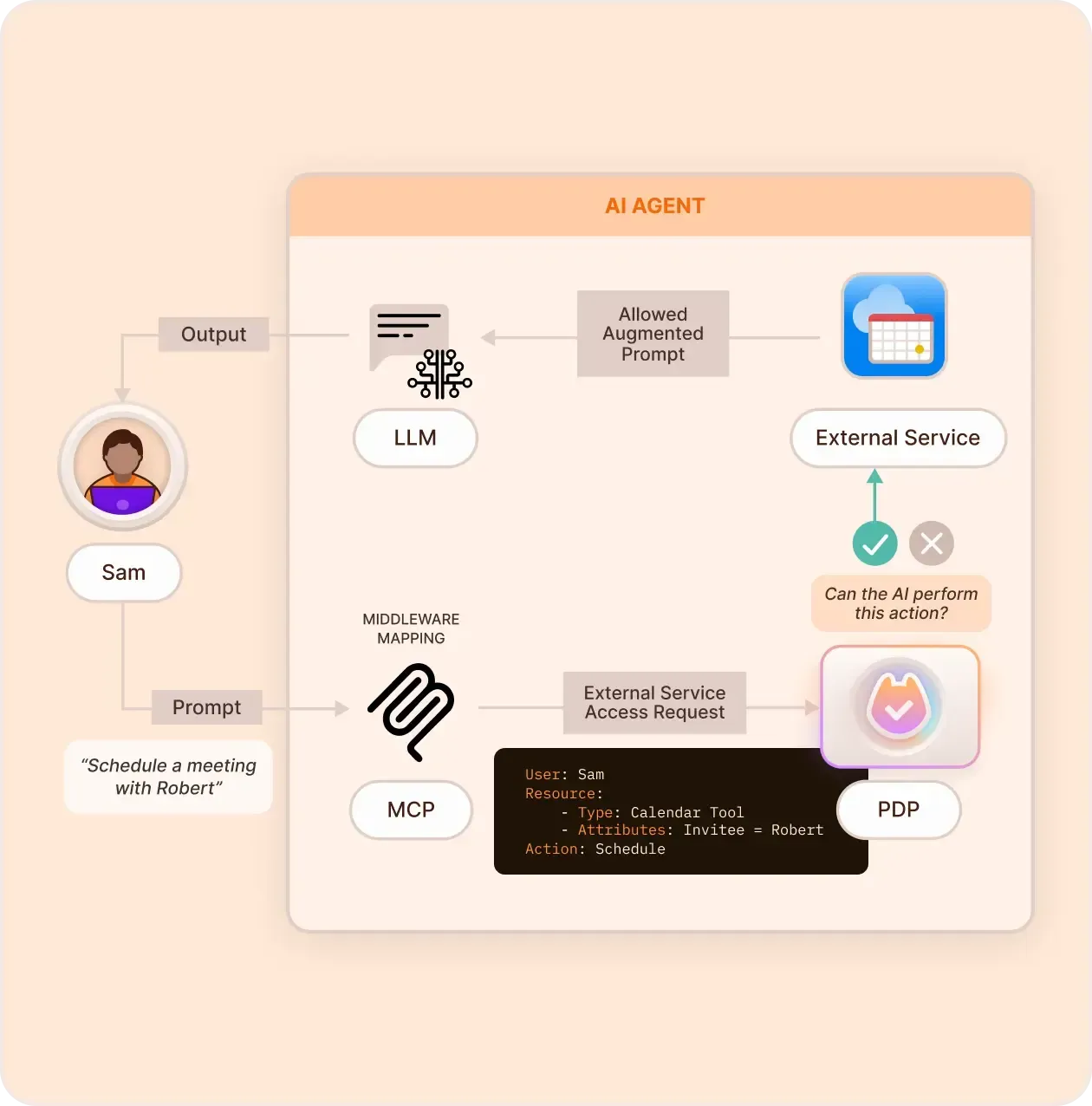

LangChain MCP Adapters connect LangChain agents to real-world access systems (like Permit.io). They convert access request and approval tools into LangChain-compatible tools, so agents can ask for access, but require policy-defined human approval before proceeding. Use it when you're already using LangChain, you want policy-driven access control, or when you’re integrating with Permit MCP workflows.

Permit.io offers a complete authorization-as-a-service layer. With its Model Context Protocol (MCP) server, you can turn access and approval workflows into tools that an LLM can call but only execute after human approval via built-in logic or dashboard controls. Use it when you need to manage sensitive permissions, full auditability, and role enforcement, and want UI + API + agent-level integration.

These tools aren’t mutually exclusive.

In fact, some of the strongest HITL setups use LangGraph + MCP Adapters + Permit.io together for full control, flexibility, and policy enforcement.

Building a human-in-the-loop (HITL) workflow isn’t just about picking a framework—it’s about choosing where, when, and how to involve humans in the agent's decision-making process.

Below are the most effective HITL design patterns used across real-world agentic systems.

Interrupt & Resume:

Used in: LangGraph

How it works: The agent is paused mid-execution using an interrupt() call. Human input is collected (yes/no, select from options, etc.), and then the workflow resumes based on the response.
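To make the pause-and-resume mechanics concrete, the sketch below imitates them with a plain Python generator. This is not LangGraph's API; `order_workflow`, `run_until_pause`, and `resume` are hypothetical names for this example. The `yield` plays the role of the interrupt: execution stops there until a human decision is sent back in.

```python
def order_workflow(dish: str):
    """A toy workflow with a single human checkpoint."""
    # Work done before the checkpoint.
    question = f"Order '{dish}'?"
    # Pause here and hand the question to a human.
    # Nothing below runs until a decision is sent back in.
    decision = yield question
    # Resume based on the human's response.
    if decision == "yes":
        return f"ordered {dish}"
    return f"cancelled {dish}"


def run_until_pause(workflow) -> str:
    """Advance the workflow to its checkpoint and return the pending question."""
    return next(workflow)


def resume(workflow, decision: str) -> str:
    """Feed the human decision back in and return the workflow's final result."""
    try:
        workflow.send(decision)
    except StopIteration as done:
        return done.value
    raise RuntimeError("workflow paused again; this sketch supports one checkpoint")


wf = order_workflow("truffle pasta")
question = run_until_pause(wf)   # the workflow is now paused
result = resume(wf, "yes")       # the human approved, so the workflow finishes
print(question)
print(result)
```

A real framework also persists the paused state so the process can restart between the pause and the resume; the generator here only lives in memory.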

Best for: gating specific tool calls, for example approve_access_request.

Human-as-a-Tool:

Used in: LangChain, CrewAI, HumanLayer

How it works: The agent sees a "human" as just another callable tool. When it's unsure, it routes a question to the human tool and uses the returned response in context.
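A minimal sketch of this idea, without any of the frameworks above: the human is registered in the same tool table as an automated function, and the agent routes to it when it is unsure. The names (`make_human_tool`, `agent_step`, `lookup_order_status`) and the `confident` flag are assumptions made for this illustration; a real agent would decide which tool to call through the LLM.

```python
from typing import Callable, Dict

Tool = Callable[[str], str]


def make_human_tool(answer_fn: Callable[[str], str]) -> Tool:
    """Wrap a way of reaching a person so it looks like any other tool."""
    def ask_human(question: str) -> str:
        return answer_fn(question)
    return ask_human


def lookup_order_status(order_id: str) -> str:
    """An ordinary automated tool."""
    return f"order {order_id} is out for delivery"


def agent_step(task: str, confident: bool, tools: Dict[str, Tool]) -> str:
    """Toy routing: use the automated tool when confident, otherwise ask the human."""
    if confident:
        return tools["lookup_order_status"](task)
    guidance = tools["ask_human"](f"I am unsure how to handle: {task}")
    return f"human guidance: {guidance}"


tools: Dict[str, Tool] = {
    "lookup_order_status": lookup_order_status,
    # A canned answer stands in for a real person replying.
    "ask_human": make_human_tool(lambda question: "escalate to billing"),
}

print(agent_step("A17", True, tools))
print(agent_step("refund dispute", False, tools))
```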

Best for:

Approval Flows:

Used in: Permit.io, ReBAC systems

How it works: Permissions are structured such that only specific human roles (e.g. “Reviewer”) can approve access or actions. Agents can initiate requests, but only users with the right roles can approve them via UI or API.
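The role check at the heart of this pattern can be sketched in a few lines. This is an in-memory stand-in, not Permit.io's API: `AccessRequest`, `decide`, and the `APPROVER_ROLES` set are hypothetical names for the example. The point is that the agent can create a request, but only a user holding an approver role can change its status.

```python
from dataclasses import dataclass
from typing import Iterable

# Hypothetical set of roles that may approve or deny requests.
APPROVER_ROLES = {"Reviewer"}


@dataclass
class AccessRequest:
    requester: str
    resource: str
    status: str = "pending"
    decided_by: str = ""


def decide(request: AccessRequest, user: str,
           user_roles: Iterable[str], approve: bool) -> AccessRequest:
    """Record a decision, but only if the user holds an approver role."""
    if not APPROVER_ROLES & set(user_roles):
        raise PermissionError(f"{user} is not allowed to decide access requests")
    request.status = "approved" if approve else "denied"
    request.decided_by = user
    return request


# The agent initiates the request...
req = AccessRequest(requester="agent-42", resource="prod-db")

# ...but cannot approve it itself.
try:
    decide(req, "agent-42", ["Agent"], approve=True)
except PermissionError as err:
    print(err)

# A human with the right role can.
decide(req, "dana", ["Reviewer"], approve=True)
print(req.status, req.decided_by)
```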

Best for:

Fallback Escalation:

Used in: Hybrid agent-human systems

How it works: The agent attempts to complete a task. If it fails, lacks permissions, or gets stuck, it escalates the task to a human via Slack, email, or a dashboard for resolution.
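Here is one way the escalation path might look, again as a plain Python sketch. The `escalations` queue stands in for Slack, email, or a dashboard, and `with_escalation` and `delete_customer_record` are hypothetical names. The agent tries the task first and hands it to a human only when the attempt fails.

```python
from collections import deque
from typing import Callable

# Stand-in for a Slack channel, inbox, or dashboard queue.
escalations: deque = deque()


def with_escalation(task_name: str, attempt: Callable[[], str]) -> str:
    """Try the task; on a permission or runtime failure, hand it to a human."""
    try:
        return attempt()
    except (PermissionError, RuntimeError) as err:
        escalations.append({"task": task_name, "error": str(err)})
        return f"escalated: {task_name}"


def delete_customer_record() -> str:
    """A task the agent is not permitted to complete on its own."""
    raise PermissionError("agent lacks delete permission")


print(with_escalation("summarize_ticket", lambda: "summary ready"))
print(with_escalation("delete_customer_record", delete_customer_record))
print(list(escalations))
```

Only the failed task ends up in the queue, so humans are pulled in for the exceptions rather than for every request.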

Best for:

These patterns are not mutually exclusive. The best HITL architectures often combine:

- interrupt() for real-time decisions
- approval roles for policy gating
- fallback escalation for graceful recovery
- audit-first for transparency

Use them modularly. And always design around the question:

"Would I be okay if the agent did this without asking me?"

To see all of this in action, let’s take a look at a working example:

A family food ordering system where AI agents handle routine tasks—but humans remain in control of sensitive decisions.

This system demonstrates a real-world implementation of HITL with:

Parents can view and manage all restaurants and dishes.

Children can only view a subset of restaurants.

If a child wants to access a restricted restaurant or order a premium dish, the agent does not proceed on its own: the workflow pauses for human approval using the interrupt() function.

This means that even though the LLM handles interactions and tool selection, it never executes sensitive actions on its own.

Using interrupt() ensures that decisions are deliberate.

Explore the full implementation in the open-source repo.

As AI agents take on more responsibility in our workflows, building for oversight isn’t optional—it’s foundational. Human-in-the-loop (HITL) systems strike the right balance between speed and safety, automation and accountability.

Whether you're adding simple approval gates or designing complex agent workflows with delegated authority, here are the best practices to follow:

Identify where human input is critical—access approvals, configuration changes, destructive actions—and design explicit checkpoints. Use tools like interrupt() to enforce those pauses.

When asking humans for approval, keep the request clear and focused, and explain why it's needed. Don't overload reviewers with raw JSON; summarize the context when possible.
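For instance, a small helper can turn the raw request payload into a short message a reviewer can read at a glance. The field names (`requester`, `action`, `resource`, `reason`) are assumptions for this sketch; adapt them to whatever your request objects actually contain.

```python
def summarize_request(request: dict) -> str:
    """Turn a raw approval request into a short, reviewer-friendly message."""
    return (
        f"{request['requester']} wants to {request['action']} "
        f"on {request['resource']}.\n"
        f"Why: {request['reason']}\n"
        "Reply 'approve' or 'deny'."
    )


raw_request = {
    "requester": "support-agent",
    "action": "issue a refund",
    "resource": "order 1042",
    "reason": "customer was charged twice",
    "trace_id": "internal detail the reviewer does not need",
}

print(summarize_request(raw_request))
```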

Hardcoding access rules leads to weak, non-scalable logic. Delegate approval logic to a policy engine, where changes are declarative, versioned, and enforceable across systems.
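The difference is easier to see in code. In the sketch below, the rules live in a data table rather than in scattered `if` statements, so changing what needs approval means editing data, not logic. The table is a simplified in-process stand-in for a real policy engine, and the action names and the `evaluate` function are hypothetical.

```python
# Declarative rules: action name -> outcome.
# In a real setup this would live in a policy engine, not in application code.
POLICY = {
    "view_restaurant": "allow",
    "order_dish": "allow",
    "order_premium_dish": "require_approval",
    "delete_customer_data": "require_approval",
}


def evaluate(action: str) -> str:
    """Look up the outcome for an action; unknown actions are denied by default."""
    return POLICY.get(action, "deny")


for action in ("order_dish", "order_premium_dish", "drop_database"):
    print(action, "->", evaluate(action))
```

Defaulting to "deny" for unlisted actions means a newly added agent capability is blocked until someone writes a rule for it.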

Audit trails aren’t just for compliance—they’re part of the HITL loop. Ensure that every access request, approval, and denial is tracked and reviewable.
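A minimal sketch of such a trail, assuming an append-only list of JSON lines as the storage (a real system would use a database or a log service). The `record_event` and `history_for` helpers are hypothetical names for this example.

```python
import json
from datetime import datetime, timezone
from typing import List


def record_event(log: List[str], event: str, actor: str, subject: str) -> dict:
    """Append one timestamped entry (request, approval, or denial) to the log."""
    entry = {
        "at": datetime.now(timezone.utc).isoformat(),
        "event": event,
        "actor": actor,
        "subject": subject,
    }
    log.append(json.dumps(entry, sort_keys=True))
    return entry


def history_for(log: List[str], subject: str) -> List[dict]:
    """Return every recorded entry about one subject, oldest first."""
    return [e for e in map(json.loads, log) if e["subject"] == subject]


audit_log: List[str] = []
record_event(audit_log, "access_requested", "agent-42", "prod-db")
record_event(audit_log, "access_denied", "dana", "prod-db")
record_event(audit_log, "access_requested", "agent-42", "staging-db")

for entry in history_for(audit_log, "prod-db"):
    print(entry["event"], "by", entry["actor"])
```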

Not every human needs to approve something in real time. For low-priority or non-blocking flows, route to async review channels (Slack, email, dashboards) using frameworks like HumanLayer.

Human-in-the-loop is not a temporary workaround—it’s a long-term pattern for building AI agents we can trust. It ensures that LLMs stay within safe operational boundaries, sensitive actions don’t slip through automation, and teams remain in control—even as autonomy grows.

With tools like LangGraph, Permit.io, and LangChain MCP Adapters, it’s easier than ever to give agents the ability to ask for permission—without hardcoding approvals or sacrificing usability.

The best agents aren’t just intelligent. They’re responsible.

Full-Stack Software Technical Leader | Security, JavaScript, DevRel, OPA | Writer and Public Speaker